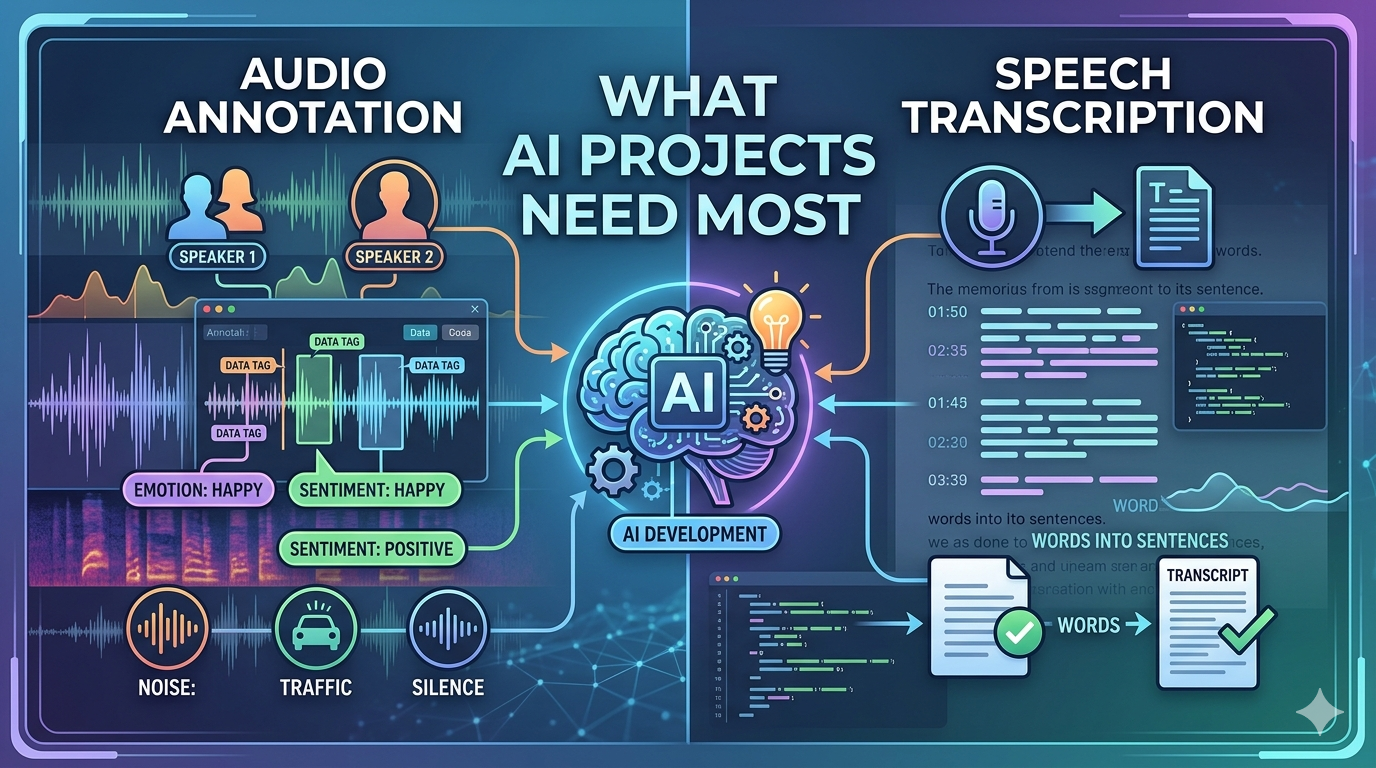

Audio Annotation vs Speech Transcription: What AI Projects Need Most

In the rapidly evolving landscape of artificial intelligence, high-quality data remains the backbone of successful model development. Among the most critical components of audio-based AI systems are audio annotation and speech transcription—two processes that are often misunderstood or used interchangeably.

For businesses working with a data annotation company or exploring data annotation outsourcing, understanding the distinction between these two approaches is essential. Each plays a unique role in training AI models, and choosing the right one can significantly impact performance, scalability, and accuracy.

At Annotera, we help organizations navigate this decision with precision. This article breaks down the differences, use cases, and strategic importance of audio annotation and speech transcription to help AI projects determine what they truly need.

Understanding Speech Transcription

Speech transcription is the process of converting spoken language into written text. It is a foundational task in AI, particularly for systems built around Automatic Speech Recognition (ASR).

In simple terms, transcription answers the question: “What is being said?”

Speech transcription is widely used in applications such as:

-

Voice assistants

-

Meeting summarization tools

-

Subtitling and captioning

-

Customer support analytics

High-quality transcription datasets are critical for training ASR models because they provide direct mappings between audio signals and textual output.

Key Characteristics of Speech Transcription

-

Focuses solely on spoken words

-

Produces clean, readable text output

-

Often includes punctuation and formatting

-

May involve verbatim or intelligent transcription styles

For AI projects that primarily need to interpret language, such as chatbots or voice search systems, speech transcription is indispensable.

What is Audio Annotation?

Audio annotation is a broader and more sophisticated process. It involves labeling audio data with multiple layers of metadata—not just text, but also contextual and acoustic information.

Audio annotation answers a more complex question: “What is happening in this audio?”

This process includes:

-

Speech-to-text transcription

-

Speaker identification (who is speaking)

-

Timestamping (when something occurs)

-

Sound event detection (e.g., sirens, background noise)

-

Emotion and sentiment tagging

Unlike transcription alone, audio annotation transforms raw audio into structured, machine-readable training data.

Key Characteristics of Audio Annotation

-

Multi-layered and context-rich

-

Includes both speech and non-speech elements

-

Supports advanced AI tasks beyond language recognition

-

Enables deeper understanding of audio environments

A professional audio annotation company like Annotera ensures that datasets are not only accurate but also aligned with the specific objectives of AI models.

Audio Annotation vs Speech Transcription: Core Differences

Although related, these processes serve distinct purposes in AI development.

| Aspect | Speech Transcription | Audio Annotation |

|---|---|---|

| Scope | Converts speech to text | Labels all audio elements |

| Complexity | Relatively simple | Multi-dimensional |

| Output | Text | Structured metadata + text |

| Use Case | ASR, subtitles | NLP, emotion AI, sound detection |

| Context Awareness | Limited | High |

One critical misconception is that audio annotation is simply transcription. In reality, transcription is just one component of the broader annotation workflow.

When AI Projects Need Speech Transcription Most

Certain AI applications rely heavily on textual accuracy rather than contextual audio understanding. In such cases, speech transcription is the primary requirement.

Ideal Use Cases

-

Automatic Speech Recognition (ASR) Systems

Models need large volumes of accurately transcribed audio-text pairs. -

Voice Search and Virtual Assistants

Systems like voice-enabled apps depend on converting speech into commands. -

Content Accessibility

Transcriptions enable indexing, searchability, and accessibility for audio/video content. -

Customer Interaction Analysis

Businesses analyze transcripts of calls to extract insights and improve service quality.

For these applications, investing in speech transcription services through data annotation outsourcing ensures scalability and cost efficiency.

When AI Projects Need Audio Annotation Most

For more advanced AI systems, transcription alone is insufficient. These projects require deeper insights into audio data—making audio annotation essential.

Ideal Use Cases

-

Emotion Recognition Systems

Detecting tone, sentiment, and emotional cues requires labeled metadata beyond words. -

Speaker Diarization and Identification

Systems must distinguish between multiple speakers and track conversations. -

Autonomous Systems and Surveillance

Recognizing environmental sounds (e.g., alarms, crashes) is critical. -

Multimodal AI Models

Combining audio with video or text requires rich annotations for context. -

Healthcare and Compliance AI

Identifying pauses, stress, or anomalies in speech can be crucial.

Audio annotation enables machines to interpret not just speech, but intent, environment, and interaction dynamics.

The Role of Data Annotation Companies

Choosing between transcription and annotation often depends on project complexity, but execution quality is equally important.

A specialized data annotation company like Annotera brings:

-

Domain-specific expertise

-

Scalable workforce for large datasets

-

Quality assurance pipelines

-

Custom annotation guidelines

Many organizations opt for data annotation outsourcing to reduce operational costs while maintaining high-quality output. This is particularly beneficial for startups and enterprises dealing with multilingual or large-scale datasets.

Similarly, partnering with an experienced audio annotation company ensures that even complex annotation tasks—such as emotion tagging or acoustic event detection—are handled with precision.

Why Most AI Projects Need Both

In practice, the choice is rarely binary. Most modern AI systems benefit from a combination of speech transcription and audio annotation.

Integrated Approach

-

Transcription provides linguistic clarity

-

Annotation adds contextual intelligence

For example:

-

A call center AI system may use transcription for conversation analysis and annotation for sentiment detection.

-

A voice assistant may rely on transcription for commands but require annotation for speaker identification and intent detection.

As AI models become more sophisticated, multi-layered datasets are becoming the standard rather than the exception.

Key Factors to Consider When Choosing

Before deciding what your AI project needs most, evaluate the following:

1. Project Objective

-

Language understanding → Transcription

-

Contextual audio intelligence → Annotation

2. Model Complexity

-

Basic NLP models → Transcription

-

Advanced AI systems → Annotation

3. Budget and Timeline

-

Transcription is generally faster and less expensive

-

Annotation requires more time and expertise

4. Scalability Requirements

Outsourcing to a reliable partner ensures efficient scaling without compromising quality.

How Annotera Supports AI Success

At Annotera, we specialize in delivering both speech transcription and advanced audio annotation services tailored to AI-driven applications.

As a trusted data annotation company, we offer:

-

End-to-end data annotation outsourcing

-

High-quality, multilingual datasets

-

Custom workflows for diverse industries

-

Scalable solutions for startups and enterprises

Our expertise as an audio annotation company ensures that your AI models are trained on rich, accurate, and context-aware data—maximizing performance and reliability.

Conclusion

Audio annotation and speech transcription are both essential components of AI development, but they serve different purposes. While transcription focuses on converting speech into text, annotation provides the contextual depth needed for advanced AI capabilities.

The real question is not which one is better—but which one aligns with your project goals.

For simple language-based applications, transcription may be enough. But for complex, real-world AI systems, audio annotation becomes indispensable. In most cases, a hybrid approach delivers the best results.

By partnering with an experienced provider like Annotera, businesses can leverage the full potential of both approaches—ensuring their AI models are accurate, scalable, and future-ready.

- Art & Craft

- Causes & Effect

- Dance & Music

- Health & Fitness

- Food & Wellness

- Historic Places

- Homes & Gardening

- Literature & Knowledge

- Science and Technology

- Social Networking

- Social Commerce

- Party & Celebration

- Religion & Festivals

- Shopping & Vendors

- Sports & Games

- Film & Theater

- Digital Creators & Community

- Influencer CCC

- Corporate & Collaboration

- Startup & Scope

- Investment & Growth

- VC & Angel Investors

- Agriculture & farmers

- Nature & Universe

- News & Media

- Real Estate & Property

- Artificial Intellegence

- Political Coverage

- Winners & Loosers